Does AI have an image problem?

Posted on Jun 30, 2020 by Matthew Gooding

While artificial intelligence has the potential to bring about massive positive changes in society, the emergence of powerful and complex algorithms is not without its drawbacks. Here, Matthew Gooding talks to four experts to discuss the challenges facing those working in this field.

Cambridge is awash with artificial intelligence companies. Barely a week goes by without a start-up announcing it will use machine learning to revolutionise a hitherto untouched area of our lives.

But while entrepreneurs and investors have rushed to embrace the potential of machine learning, with over £800m of funding received by British AI businesses in the first six months of 2019 alone, consumers are not so sure.

Research from the Edelman Centre of AI Expertise, commissioned earlier this year in conjunction with the World Economic Forum, found that 54% of the general public believe AI development will hurt the poor, while 71% are concerned it will lead to a loss of human intelligence. Meanwhile, 60% of those polled feel greater regulation is critical to AI’s continued safe development.

Like many emerging technologies, AI faces a battle to convince people that its benefits outweigh its drawbacks, and the depictions of powerful and rogue AIs often found in popular culture add another level of confusion.

Here, four leading lights from the Cambridge AI scene tell Cambridge Catalyst about some of the misconceptions and challenges facing their industries.

Could AI enslave humans?

“It’s very difficult to build something that’s completely flawless,” says Tactful AI’s CEO Mohamed Elmasry, when I ask him if there’ll ever be an artificial intelligence that can rule the world. And after “15 to 20 years” working in the industry, he should know.

“No one was calling it AI when I started,” he recalls. “We were talking about things like neural networks. The first projects I worked on were about controlling motors or small power stations, trying to get them to think like humans so they’d know when to speed up or slow down.”

This kind of relatively rudimentary task is a long way from the all-conquering computers depicted in films like Terminator and The Matrix, and despite the hype, Mohamed expects this kind of general AI to remain firmly in the realm of science fiction.

“You would need a lot of changes in the way computers work,” he says. “Different hardware, layers and layers of different software, a new type of programming. With the current technologies, I can’t see a path to that.

“For an AI to work properly you have to put in a lot of effort to train it, and because of this it’s good for specific processes. But a machine will always lack the human capacity to know everything, and be limited by the information you give it – an AI can’t build something by itself. The standard of machine-to-machine communication you would need doesn’t exist, either.”

Though his background is in hardware and Internet of Things (IoT) systems, Mohamed’s day-to-day work at Tactful is now software focused. The company has built an AI system which helps businesses improve their engagement with clients.

“We’re trying to transform customer care,” he says. “We work with engagement teams to provide them with relevant information while they’re dealing with customers. Our platform uses natural language processing to follow what’s happening in a conversation between the end user and the customer care team, and provides specific pieces of relevant information. So if someone is asking for a phone upgrade, for example, it will look up their account, contracts and the upgrades they’re eligible for, and provide the agent with the information they need to advise the customer.

A machine will always lack the human capacity to know everything, and be limited by the information you give it

“This helps the company offer a more efficient and personalised service, and improves customer satisfaction and engagement.”

Tactful started life in 2016 as an IoT business, but pivoted to its current product last year after Mohamed and his co-founders spotted a gap in the market. Its product is already in the hands of customers around the world, and last month the company agreed a deal with two major hotels in Saudi Arabia, Makkah Clock Royal Tower and Raffles Makkah Palace, which will use Tactful systems to help their guests.

Is AI biased?

Examples of AI reinforcing social biases are myriad, including an infamous occasion when a Google image recognition algorithm classified black people as gorillas. “Bias is a really important problem in AI,” says Libby Kinsey, a machine learning specialist and advisor at Iprova, the Cambridge company which uses AI to help companies develop new inventions. “It occurs when people make generalised claims which aren’t backed up by sufficient data, or data which has been wrongly applied to all user groups.

“But we’re all a bit biased, and I think these cases shine a light on problematic areas for humans in general. In the industry we’re starting to see a move away from the old Facebook mantra of ‘move fast and break things’ – people are becoming more conscious about how they use data and there’s a lot of work going on around establishing good practice.”

Iprova’s team of invention developers (surely a candidate for the world’s best job title?) uses AI to find the most promising opportunities. “Our system helps them to make those connections.”

Libby’s background is in venture capital, but she retrained in machine learning after seeing a rapid rise in opportunities in the sector. “When I tell people I work in AI, reactions tend to fall into the extreme positive or extreme negative category,” she says.

“One of the most common fears is around loss of jobs, but I think machine learning has the potential to transform roles, rather than replace them, because it’s good at doing the routine, boring tasks that humans find difficult.

“There was a time, around 2014 to 2016, where people were simply saying, ‘there’s AI, what can we do with it?’. Now I think people are becoming more aware of what it can do and thinking more about how it can be translated into value for businesses.”

Iprova is based at the Bradfield Centre on Cambridge Science Park, and is currently hiring across a range of roles.

Is AI unethical?

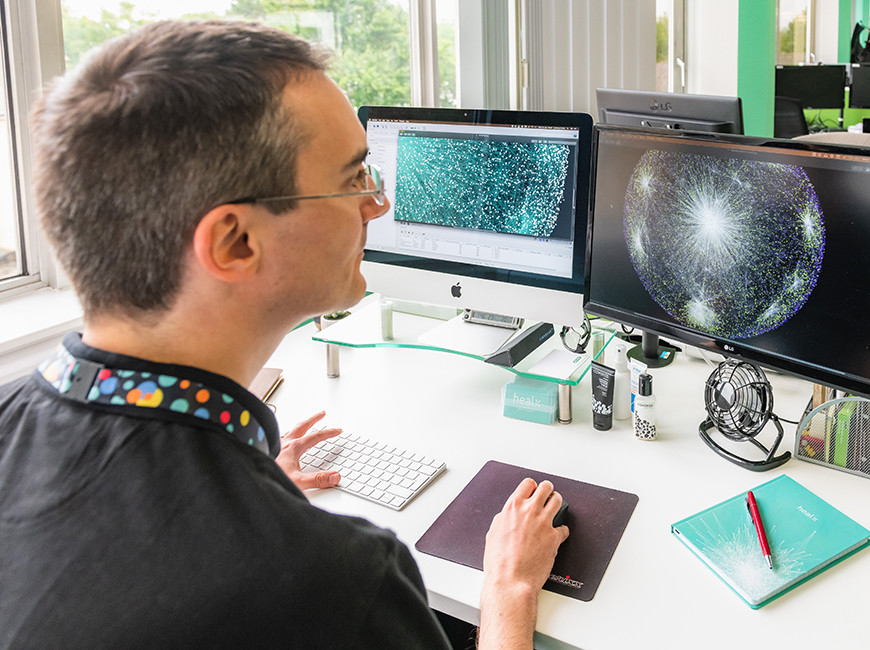

Andrea Pierleoni and his team at Healx use AI to come up with potential new treatments for patients with rare diseases. Despite this being an obviously positive application of machine learning, he is acutely aware of the need for AI to develop in an ethical way.

“We’re in a bit of a bubble in Cambridge, so people around here understand what we’re doing, and the area in which we work is something everyone can benefit from,” he says.

“Other areas cause more concern; AI is basically a set of very powerful tools, for good or bad. The negative perceptions around it arise because there’s always a lot more noise around bad things. But AI also has the potential to make major improvements to our lives. As with everything that’s powerful, it needs to be used correctly, which is why ethics are really important. Ethics start with researchers: they have to consider the implications of what they’re working on, and whether they will necessarily be positive.

“A good example is a model developed by OpenAI, which had the potential to generate realistic fake news reports if it wasn’t used in the right way. They decided to only release part of the model because they knew that part could have very good effects.”

Andrea is head of AI at Healx, which was co-founded in 2014 by CEO Dr Tim Guilliams and Dr David Brown, the co-inventor of Viagra. The company aims to advance 100 rare disease treatments towards the clinic by 2025 using Healnet, its AI platform, which delivers data-driven treatment predictions that can shorten the discovery-to-clinic timeline to as little as 24 months. The company recently raised $56m in Series B financing which it will use to develop its drug pipeline and to launch a global Rare Treatment Accelerator programme, which will help it connect with more rare disease communities.

“We work with drugs that are already approved, and I lead a team of people whose job it is to make sense of all the information that we can digest around these drugs,” Andrea says.

AI is basically a set of very powerful tools, and can be used for good or bad

“We use natural language processing to understand texts, looking at publications and other relevant documents, then extract the information and put it together. This is then used to make predictions about which drug may work for a type of condition.

“We’re giving patients with rare diseases access to medication where they might otherwise have nothing.”

Doesn’t AI need A LOT of data?

Artificial intelligence and big data often go hand-in-hand, but generating the kind of enormous datasets required to train an algorithm can come at a big financial and environmental cost. This is why Cambridge’s Prowler.io is doing things differently, working with what CEO Vishal Chatrath describes as “small data” to create its novel AI decision-making platform.

“Machine learning relies on lots and lots of data points and lots of information, and for that you need a lot of sensors,” he says. “We don’t believe in this approach: it’s costly and involves putting a lot of plastic and silicon in the world, which isn’t that great from an environmental impact perspective.

“Everyone talks about a future where we will need big data, but this is often driven by IT companies trying to sell server space, rather than what can add value for businesses.”

While most AI companies base their systems on deep neural networks, algorithms that learn as they are fed more and more data, Prowler’s system uses Gaussian theory, a type of probabilistic modelling that requires a lot less information.

Prowler’s trick has been to apply this theory at scale, something previously thought to be impractical, so that its AI can help the company’s clients, working in industries such as financial services and logistics, make smarter decisions around their processes and supply chains. Vishal believes moving away from the big data model can help smaller businesses embrace the positive impact of AI.

“Companies of all sizes should be able to benefit from AI,” he says. “People do think it’s just for the big corporates, but that all stems from this idea that you need lots of data and it’s going to be expensive.”

Everyone talks about a future where we will need big data, but this is often driven by IT companies trying to sell server space

Prowler has enjoyed a stellar 2019, securing additional investment and major new clients. And while Vishal believes more and more people are opening their eyes to the potential of AI, he is aware that the industry still has some work to do on its image.

“I think one of the biggest problems is around expectations and the hype that comes with AI, because if you build up expectations and then don’t meet them, you get into difficulties,” he says. “I think we’re now in a place where people are becoming more aware of the hype and having a healthy scepticism towards it.

“When we go and talk to customers we just ask them about their problems and how we can solve them. We take the AI out of the customer’s world, because the method we use to solve their problem isn’t particularly relevant – whether we’re using a hammer, a spade, or an AI is a moot point.”